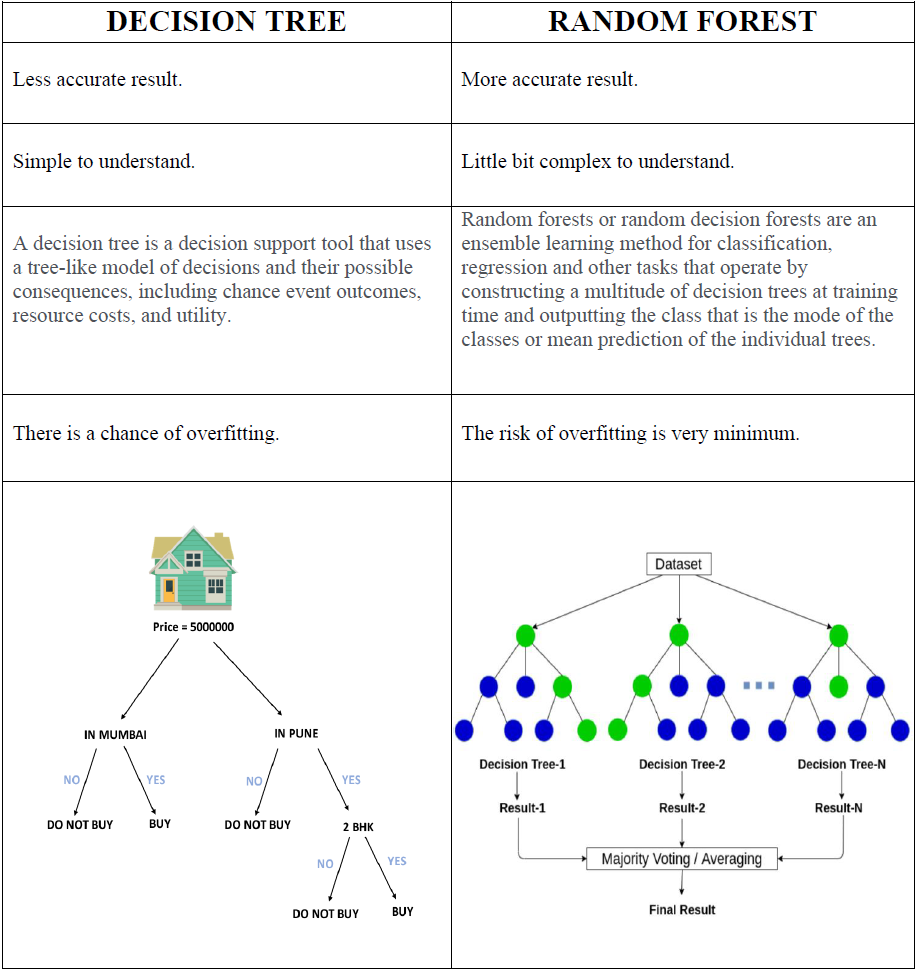

The random forest, first described by Breiman (2001), is an ensemble approach for building predictive models. The “forest” in this approach is a series of decision trees that act as “weak” classifiers: as individuals they are poor predictors, but in aggregate they form a robust prediction. Because of their simplicity, lack of assumptions, and generally high performance, random forests have been used in a very wide range of domains where machine learning has been applied.

Random forests don’t make any strong assumptions about the scale and normality of incoming data. They perform well with mixed numerical and categorical data, don’t require much tuning to get a reasonable first version of a predictive model, are fast to train, are intuitive to understand, report feature importance as part of the model, can handle missing data in many implementations, and are available in most major programming languages. These characteristics make random forests a great starting point for any project where you’re building a predictive model or exploring the feasibility of applying machine learning to a new domain.

In order to understand a random forest, some general background on decision trees is needed.

Classification and Regression Tree models, or CART models, were introduced by Breiman et al. In these models, a top-down approach is applied to observation data. The general idea is that, given a set of observations, the following question is asked: is every target variable in this set the same (or nearly the same)? If yes, label the set of observations with the most frequent class; if no, find the best rule that splits the observations into the purest sets of observations.

Here is an example as applied to the iris data set:

    from sklearn.datasets import load_iris
    from sklearn.tree import DecisionTreeClassifier, export_graphviz

    iris_data = load_iris()
    model = DecisionTreeClassifier()  # default settings assumed; the original constructor call is not shown
    model.fit(iris_data.data, iris_data.target)

In this tree, the decision for determining the species of an iris is made as follows. To read the tree, start from the top white node and use the first line of each node to see how the current observations were split into two new nodes.

Now, we can use this decision tree to classify new observations.

Observation 1
A flower with a petal width of 0.7, petal length of 1.0, and a sepal width of 3.0.

Observation 2
A flower with a petal width of 0.9, petal length of 1.0, and a sepal width of 3.0.

The questions asked along the way are:

1. Is the petal width less than or equal to 0.8?
2. Is the petal width less than or equal to 1.75?
3. Is the petal length less than or equal to 4.95?
4. Is the petal width less than or equal to 1.65?

While great for producing models that are easy to understand and implement, decision trees also tend to overfit their training data, which makes them perform poorly if data shown to them later don’t closely match what they were trained on. In the special case of regression trees, they can also only predict within the range of labels that they’ve seen before, which means that they have explicit upper and lower bounds on the numbers they can produce.

Ensemble Methods

Ensemble methods are algorithms that combine multiple algorithms into a single predictive model in order to decrease variance, decrease bias, or improve predictions.

Ensemble methods are usually broken into two categories.
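As a rough illustration of the random forest idea, the sketch below fits a single decision tree and a forest of trees on the iris data with scikit-learn, compares their cross-validated accuracy, and prints the forest's feature importances. The choice of 100 trees, 5-fold cross-validation, and the fixed `random_state` are assumptions made for this example, not settings taken from the text.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

iris_data = load_iris()
X, y = iris_data.data, iris_data.target

# A single decision tree: easy to read, but prone to overfitting.
tree = DecisionTreeClassifier(random_state=0)

# A random forest: many trees, each grown on a bootstrap sample of the
# rows, with the trees' predictions aggregated into one answer.
# n_estimators=100 and random_state=0 are illustrative assumptions.
forest = RandomForestClassifier(n_estimators=100, random_state=0)

# 5-fold cross-validation (an assumed setup) scores each model on
# data it was not trained on.
tree_scores = cross_val_score(tree, X, y, cv=5)
forest_scores = cross_val_score(forest, X, y, cv=5)
print(f"single tree mean accuracy:   {tree_scores.mean():.3f}")
print(f"random forest mean accuracy: {forest_scores.mean():.3f}")

# Fit on all the data to inspect which features the forest relied on.
forest.fit(X, y)
ranked = sorted(
    zip(iris_data.feature_names, forest.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranked:
    print(f"{name}: {importance:.3f}")
```

On a small, clean data set like iris the two models may score about the same; the gap between a single tree and a forest tends to show up on noisier data with more features.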
